Difference between revisions of "Argus standard image processing"

(→Geo-referencing of oblique video data) |

Dronkers J (talk | contribs) |

||

| (21 intermediate revisions by 5 users not shown) | |||

| Line 1: | Line 1: | ||

| + | {{Review|name=Dhuntley |AuthorID=13392}} | ||

| + | This article describes how [[data]] collected with an [[Argus video monitoring system]] can be converted from image to field co-ordinates. The article provides also an introduction into Argus databases and merging of images. | ||

| + | |||

== Geo-referencing of oblique video data == | == Geo-referencing of oblique video data == | ||

| − | + | Quantification of image features like sand bars, [[shoreline|shorelines]] and foam patterns demands the conversion of image coordinates (u,v) to field coordinates (x,y,z). This conversion depends on the camera location (xc,yc,zc), the camera orientation defined through three camera angles, namely the tilt, the [http://en.wikipedia.org/wiki/Azimuth azimuth] and the roll, and the effective focal length, which directly relates to the camera horizontal field of view. The tilt, the [http://en.wikipedia.org/wiki/Azimuth azimuth] and the roll represent the rotation with regard to the vertical z-axis, the orientation in the horizontal xy-plane and the rotation of the focal plane with respect to the horizon, respectively. The transformation of field to image coordinates is thus a rotation about the three angles and can be expressed by the linearised version of the collinearity equations, following standard photogrammetric procedures, as follows (Holland et al., 1997<ref name=Holl1997>Holland, K.T., Holman R.A., Lippmann T.C., Stanley J.and Plant N.G. (1997). Practical use of video imagery in nearshore oceanographic field studies. ''IEEE Journal of oceanic engineering'', Vol. 22, No. 1.</ref>): | |

| − | Quantification of image features like sand bars, shorelines and foam patterns demands the conversion of image coordinates (u,v) to field coordinates (x,y,z). This conversion depends on the camera location (xc,yc,zc), the camera orientation defined through three camera angles, namely the tilt, the azimuth and the roll, and the effective focal length, which directly relates to the camera horizontal field of view. The tilt, the azimuth and the roll represent the rotation with regard to the vertical z-axis, the orientation in the horizontal xy-plane and the rotation of the focal plane with respect to the horizon, respectively. The transformation of field to image coordinates is thus a rotation about the three angles and can be expressed by the linearised version of the collinearity equations, following standard photogrammetric procedures, as follows (Holland et al., 1997<ref name=Holl1997>Holland, K.T., Holman R.A., Lippmann T.C., Stanley J.and Plant N.G. (1997). Practical use of video imagery in nearshore oceanographic field studies. ''IEEE Journal of oceanic engineering'', Vol. 22, No. 1.</ref>): | ||

[[Image:Equation1.png|thumb|300px|left|Equation 1<ref name=Holl1997/>]] | [[Image:Equation1.png|thumb|300px|left|Equation 1<ref name=Holl1997/>]] | ||

[[Image:Equation2.png|thumb|300px|none|Equation 2<ref name=Holl1997/>]] | [[Image:Equation2.png|thumb|300px|none|Equation 2<ref name=Holl1997/>]] | ||

| − | + | <br style="clear:both;"/> | |

| − | |||

The coefficients L1 to L11 are linear functions of the camera orientation (three angles), the camera position (xc,yc,zc) and the effective focal length. The inverse transformation from image to field coordinates results in a system of two equations with three unknowns. The z-coordinates are assumed in this transformation to match a certain horizontal reference level or the tidal water level (see Figure 1). | The coefficients L1 to L11 are linear functions of the camera orientation (three angles), the camera position (xc,yc,zc) and the effective focal length. The inverse transformation from image to field coordinates results in a system of two equations with three unknowns. The z-coordinates are assumed in this transformation to match a certain horizontal reference level or the tidal water level (see Figure 1). | ||

| − | Standard photogrammetric procedures (Holland et al., 1997<ref name=Holl1997/>) enable the transformation from real world coordinates (x,y,z) to image coordinates (u,v) on the basis of the collinearity equations (Eq. 1 and 2).The latter contain 11 coefficients L1-L11, which are described in Equation 3. In Eq. 3, (x,y,z) are the camera xyz-coordinates, (u0,v0) are the image center uv-coordinates, f is the effective focal length and λu and λv are the horizontal and vertical scale factors. The m-coefficients describe the successive rotations around the azimuth, tilt and roll | + | Standard photogrammetric procedures (Holland et al., 1997<ref name=Holl1997/>) enable the transformation from real world coordinates (x,y,z) to image coordinates (u,v) on the basis of the collinearity equations (Eq. 1 and 2).The latter contain 11 coefficients L1-L11, which are described in Equation 3. In Eq. 3, (x,y,z) are the camera xyz-coordinates, (u0,v0) are the image center uv-coordinates, f is the effective focal length and λu and λv are the horizontal and vertical scale factors. The m-coefficients describe the successive rotations around the [http://en.wikipedia.org/wiki/Azimuth azimuth], tilt and roll (see Equation 4). |

| − | |||

| − | |||

| + | The theoretical formulations presented in Eq. 4 are valid for use with distortion-free lenses. Owing to the incorporation of λu and λv, the formulations embody a correction for the slightly non-squareness of individual pixels. The latter is induced by a minor difference in sampling frequency between the camera and image acquisition hardware, which causes a minor mismatch between the number of horizontal picture elements at the camera CCD and the number of columns at the image frame buffer, where the image processing system stores the video data. | ||

[[Image:Equation3.png|thumb|250px|left|Equation 3<ref name=Holl1997/>]] | [[Image:Equation3.png|thumb|250px|left|Equation 3<ref name=Holl1997/>]] | ||

| − | [[Image:Equation4.png|thumb|250px| | + | [[Image:imageVSrealworld.png|thumb|350px|left|Figure 1: Relation between image (u,v) and real world (x,y,z) co-ordinates]] |

| + | [[Image:Equation4.png|thumb|250px|left|Equation 4<ref name=Holl1997/>]] | ||

| + | <br style="clear:both;"/> | ||

== Argus Database == | == Argus Database == | ||

| − | + | The Argus database of meta information (hereafter referred to as argusDB) is a key-component of the Argus Runtime Environment. Without argusDB, no quantitative interpretation of image features would be possible. The argusDB contains all information needed to quantitatively interpret Argus video images. This concerns the characteristics of the local site, video station, image processor, cameras applied, the available ground control points and geometry solutions, etc. All meta information is accessible on the basis of the Argus image filename (that is: epochtime, name of the station and camera number). The different components of argusDB are all inter-connected. For instance, on the basis of the site identifier, all video stations available at that site can found. On the basis of the station identifier, all cameras of that station can be found. On the basis of a camera identifier, all geometry solutions available for that camera can be found, etc. Using the epochtime of interest as an additional constraint yields a unique geometry solution, etc. | |

| − | The Argus | ||

| − | |||

== Merging images == | == Merging images == | ||

| + | Panoramic and plan view merged images can be composed by geo-referencing the images from all the cameras of an Argus station. Plan view images enable the measurement of length scales of morphological features like breaker bars and the detection of rip currents. A very useful post-processing tool is the Argus Merge Tool which enables to rectify and join together single time averaged Argus images. This results in a plan view of the nearshore zone (see Figure 2). | ||

| − | + | [[Image:mergeEgmond.png|thumb|800px|centre|Figure 2: Merged image]] | |

| − | + | The bright regions indicate regions of intense wave breaking hence the location of shallow water depth. In this way, morphologic features like the shoreline and breaker bars can easily be identified. Within the Argus merge tool it is possible to specify the condition at which moments images are collected to create a merged image. The condition can either be a tidal level or a wave height, both user-defined. Specifying a condition with wave heights bigger than 1.5 m produces merged images on which the bars are clearly visible by the smooth white bands at locations with a lot of wave breaking. These images are appropriate to analyse the behaviour of bars. When a low-tide level condition is specified, for example NAP -0.60 m, the rendered merged images are very useful to analyse the morphological features of the intertidal beach. | |

| − | + | ==See also== | |

| + | ===Internal links=== | ||

| + | Related articles: | ||

| + | * [[Argus video monitoring system]] | ||

| + | * [[Argus applications]] | ||

| + | * [[Argus image types and conventions]] | ||

| − | + | ==References== | |

| + | <references/> | ||

| − | + | {{author | |

| + | |AuthorID=13613 | ||

| + | |AuthorName=Cohen | ||

| + | |AuthorFullName=Cohen, Anna}} | ||

| − | [[Category: | + | [[Category:Coastal and marine observation and monitoring]] |

| − | + | [[Category:Observation of physical parameters]] | |

| − | [[Category: | ||

Latest revision as of 20:29, 10 August 2020

This article describes how data collected with an Argus video monitoring system can be converted from image to field co-ordinates. It also introduces the Argus database and the merging of images.


Geo-referencing of oblique video data

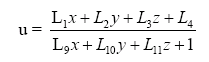

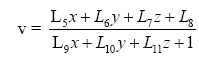

Quantifying image features such as sand bars, shorelines and foam patterns requires converting image coordinates (u,v) to field coordinates (x,y,z). This conversion depends on the camera location (xc,yc,zc), the camera orientation, defined by three angles (tilt, azimuth and roll), and the effective focal length, which relates directly to the horizontal field of view of the camera. The tilt, the azimuth and the roll represent the rotation with regard to the vertical z-axis, the orientation in the horizontal xy-plane and the rotation of the focal plane with respect to the horizon, respectively. The transformation from field to image coordinates therefore involves a rotation through these three angles and, following standard photogrammetric procedures, can be expressed by the linearised version of the collinearity equations (Equations 1 and 2; Holland et al., 1997[1]).
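The equation images are not reproduced in this text. For orientation, the standard 11-coefficient (direct linear transformation) form of the collinearity equations, which matches the description of coefficients L1 to L11 in this article, is sketched below. The exact notation used by Holland et al. (1997) may differ.

$$
u = \frac{L_1 x + L_2 y + L_3 z + L_4}{L_9 x + L_{10} y + L_{11} z + 1},
\qquad
v = \frac{L_5 x + L_6 y + L_7 z + L_8}{L_9 x + L_{10} y + L_{11} z + 1}
$$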

The coefficients L1 to L11 are linear functions of the camera orientation (three angles), the camera position (xc,yc,zc) and the effective focal length. The inverse transformation from image to field coordinates yields a system of two equations with three unknowns, which is underdetermined. To close it, the z-coordinate is assumed to match a fixed horizontal reference level or the tidal water level (see Figure 1).
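The inverse step can be illustrated with a minimal Python sketch. It assumes the standard 11-coefficient form u = (L1 x + L2 y + L3 z + L4) / (L9 x + L10 y + L11 z + 1), and likewise for v with L5 to L8. The function names and the coefficient values in the usage note are hypothetical and are not part of the Argus software. With z fixed, the two equations become linear in x and y and can be solved directly.

```python
import numpy as np


def field_to_image(L, x, y, z):
    """Forward transform: field (x, y, z) to image (u, v); L holds L1..L11."""
    L = np.asarray(L, dtype=float)
    denom = L[8] * x + L[9] * y + L[10] * z + 1.0
    u = (L[0] * x + L[1] * y + L[2] * z + L[3]) / denom
    v = (L[4] * x + L[5] * y + L[6] * z + L[7]) / denom
    return u, v


def image_to_field(L, u, v, z):
    """Inverse transform at an assumed elevation z (e.g. the tidal level).

    Multiplying both equations by the common denominator and moving the
    known z terms to the right-hand side gives a 2x2 linear system in (x, y).
    """
    L = np.asarray(L, dtype=float)
    A = np.array([
        [L[0] - u * L[8], L[1] - u * L[9]],
        [L[4] - v * L[8], L[5] - v * L[9]],
    ])
    b = np.array([
        u * (L[10] * z + 1.0) - L[2] * z - L[3],
        v * (L[10] * z + 1.0) - L[6] * z - L[7],
    ])
    x, y = np.linalg.solve(A, b)
    return x, y
```

Projecting a point with `field_to_image` and passing the result back through `image_to_field` at the same z should return the original (x, y), which is a convenient self-check for any set of coefficients.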

Standard photogrammetric procedures (Holland et al., 1997[1]) enable the transformation from real-world coordinates (x,y,z) to image coordinates (u,v) on the basis of the collinearity equations (Eq. 1 and 2). These contain 11 coefficients, L1 to L11, which are defined in Equation 3. In Eq. 3, (xc,yc,zc) are the camera coordinates, (u0,v0) are the image center coordinates, f is the effective focal length, and λu and λv are the horizontal and vertical scale factors. The m-coefficients describe the successive rotations around the azimuth, tilt and roll (see Equation 4).
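As a rough illustration of how 11 coefficients can follow from the camera parameters, the sketch below builds a generic pinhole projection matrix and normalises it. It is not a transcription of Equations 3 and 4: the angle convention (azimuth measured clockwise from the y-axis, tilt measured from the downward vertical, image v increasing downward), the way `lam_u` and `lam_v` enter, and all function names are assumptions made for this example.

```python
import numpy as np


def rotation_matrix(azimuth, tilt, roll):
    """Rows are the camera axes (right, down, forward) in field coordinates.

    Assumed convention: angles in radians, tilt = 0 looks straight down,
    tilt = pi/2 looks at the horizon, azimuth = 0 looks along +y.
    """
    sa, ca = np.sin(azimuth), np.cos(azimuth)
    st, ct = np.sin(tilt), np.cos(tilt)
    forward = np.array([sa * st, ca * st, -ct])   # optical axis
    right0 = np.array([ca, -sa, 0.0])             # horizontal, to the right
    down0 = np.cross(forward, right0)             # completes a right-handed set
    right = np.cos(roll) * right0 + np.sin(roll) * down0
    down = -np.sin(roll) * right0 + np.cos(roll) * down0
    return np.vstack([right, down, forward])


def dlt_coefficients(cam_xyz, azimuth, tilt, roll, f, u0, v0,
                     lam_u=1.0, lam_v=1.0):
    """Return L1..L11 for a distortion-free pinhole camera (sketch)."""
    R = rotation_matrix(azimuth, tilt, roll)
    C = np.asarray(cam_xyz, dtype=float)
    # Intrinsics: focal length scaled separately per image axis.
    K = np.array([[f / lam_u, 0.0, u0],
                  [0.0, f / lam_v, v0],
                  [0.0, 0.0, 1.0]])
    P = K @ R @ np.hstack([np.eye(3), -C[:, None]])  # 3x4 projection matrix
    scale = P[2, 3]
    if np.isclose(scale, 0.0):
        # Happens when the optical axis is perpendicular to the position
        # vector of the camera; shift the coordinate origin in that case.
        raise ValueError("cannot normalise: shift the coordinate origin")
    return (P / scale).ravel()[:11]
```

Under these assumptions, a point on the optical axis projects onto (u0, v0), which gives a quick sanity check of the coefficients.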

The theoretical formulations presented in Eq. 4 are valid for distortion-free lenses. Through λu and λv, the formulations include a correction for the slight non-squareness of individual pixels. This non-squareness is caused by a minor difference in sampling frequency between the camera and the image acquisition hardware, which produces a small mismatch between the number of horizontal picture elements on the camera CCD and the number of columns in the image frame buffer, where the image processing system stores the video data.

Argus Database

The Argus database of meta information (hereafter referred to as argusDB) is a key component of the Argus Runtime Environment. Without argusDB, no quantitative interpretation of image features would be possible. The argusDB contains all information needed to interpret Argus video images quantitatively: the characteristics of the local site, the video station, the image processor and the cameras used, as well as the available ground control points and geometry solutions. All meta information is accessible on the basis of the Argus image filename (that is, the epoch time, the name of the station and the camera number). The components of argusDB are all interconnected. For instance, the site identifier gives access to all video stations available at that site, the station identifier to all cameras of that station, and the camera identifier to all geometry solutions available for that camera. Using the epoch time of interest as an additional constraint yields a unique geometry solution.
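The chain of lookups described above can be sketched as follows. This is a toy in-memory model, not the argusDB schema: the class names, the fields and the rule that a geometry solution applies from its `valid_from` time until the next solution starts are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Geometry:
    valid_from: int          # epoch time (s) from which the solution applies
    coefficients: tuple      # e.g. the coefficients L1..L11


@dataclass
class Camera:
    number: int
    geometries: list = field(default_factory=list)


@dataclass
class Station:
    name: str
    cameras: dict = field(default_factory=dict)    # camera number -> Camera


@dataclass
class Site:
    identifier: str
    stations: dict = field(default_factory=dict)   # station name -> Station


def find_geometry(site, station_name, camera_number, epoch):
    """Walk site -> station -> camera, then pick the geometry for `epoch`.

    Returns the most recent solution that started at or before `epoch`,
    or None if no solution exists yet at that time.
    """
    camera = site.stations[station_name].cameras[camera_number]
    candidates = [g for g in camera.geometries if g.valid_from <= epoch]
    if not candidates:
        return None
    return max(candidates, key=lambda g: g.valid_from)
```

The three arguments after `site` correspond to the three pieces of information that the text says are carried by the image filename.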

Merging images

Panoramic and plan view merged images can be composed by geo-referencing the images from all the cameras of an Argus station. Plan view images enable the measurement of length scales of morphological features such as breaker bars, and the detection of rip currents. A very useful post-processing tool is the Argus Merge Tool, which rectifies single time-averaged Argus images and joins them together. The result is a plan view of the nearshore zone (see Figure 2).
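The idea of rectifying and joining images can be sketched in Python as follows. This is not the Argus Merge Tool: it assumes grayscale images, the 11-coefficient field-to-image transform with u as the column index and v as the row index, nearest-pixel sampling, and simple averaging where cameras overlap. It also does not exclude grid points that lie behind a camera. All names are hypothetical.

```python
import numpy as np


def rectify(image, L, x_grid, y_grid, z=0.0):
    """Sample one oblique image on a plan-view grid at elevation z.

    Grid cells that fall outside the image are set to NaN.
    """
    L = np.asarray(L, dtype=float)
    X, Y = np.meshgrid(x_grid, y_grid)
    with np.errstate(divide="ignore", invalid="ignore"):
        den = L[8] * X + L[9] * Y + L[10] * z + 1.0
        u = (L[0] * X + L[1] * Y + L[2] * z + L[3]) / den
        v = (L[4] * X + L[5] * Y + L[6] * z + L[7]) / den
    cols, rows = np.rint(u), np.rint(v)
    height, width = image.shape
    valid = (np.isfinite(cols) & np.isfinite(rows)
             & (cols >= 0) & (cols < width)
             & (rows >= 0) & (rows < height))
    out = np.full(X.shape, np.nan)
    out[valid] = image[rows[valid].astype(int), cols[valid].astype(int)]
    return out


def merge(plan_views):
    """Average the rectified views cell by cell, ignoring NaN cells."""
    stack = np.stack(plan_views)
    count = np.sum(~np.isnan(stack), axis=0)
    total = np.nansum(stack, axis=0)
    return np.where(count > 0, total / np.maximum(count, 1), np.nan)
```

Each camera is rectified onto the same (x, y) grid with its own coefficients, and `merge` then combines the per-camera plan views into one image.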

The bright regions indicate intense wave breaking and hence shallow water. In this way, morphological features such as the shoreline and breaker bars can easily be identified. The Argus Merge Tool allows the user to specify the conditions under which images are selected to create a merged image. The condition can be either a tidal level or a wave height, both user-defined. Selecting images with wave heights greater than 1.5 m produces merged images in which the bars show up clearly as smooth white bands at locations with a lot of wave breaking. These images are suitable for analysing the behaviour of bars. When a low-tide level is specified, for example NAP -0.60 m, the resulting merged images are very useful for analysing the morphological features of the intertidal beach.
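The two selection conditions can be sketched as simple filters over image records. The record fields, the function names and the tolerance around the target tidal level are assumptions for this example; only the 1.5 m and NAP -0.60 m values come from the text.

```python
from dataclasses import dataclass


@dataclass
class ImageRecord:
    filename: str           # hypothetical file name
    wave_height_m: float    # wave height at the time of the image
    tide_level_m: float     # water level relative to the datum (e.g. NAP)


def select_by_wave_height(records, min_height_m=1.5):
    """Keep images taken while waves were higher than the threshold."""
    return [r for r in records if r.wave_height_m > min_height_m]


def select_by_tide(records, target_level_m=-0.60, tolerance_m=0.05):
    """Keep images taken while the water level was near the target."""
    return [r for r in records
            if abs(r.tide_level_m - target_level_m) <= tolerance_m]
```

The first filter suits bar analysis, the second suits analysis of the intertidal beach; the selected images would then be rectified and merged.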

See also

Internal links

Related articles:

* Argus video monitoring system

* Argus applications

* Argus image types and conventions

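References

[1] Holland, K.T., Holman, R.A., Lippmann, T.C., Stanley, J. and Plant, N.G. (1997). Practical use of video imagery in nearshore oceanographic field studies. IEEE Journal of Oceanic Engineering, Vol. 22, No. 1.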
